Most Langfuse vs. LangSmith comparisons treat them as interchangeable. They're not. Both tell you what your LLM is doing in production, but they make different trade-offs. And there's a third contender that changes the question altogether: Cekura.

Langfuse vs. LangSmith vs. Cekura: At a Glance

Three tools, three different bets. Here's where each one holds up.

| 🔧 Tool | 🎯 Best For | 💰 Starting Price | ⚡ Key Strength |
|---|---|---|---|
| Langfuse | Open-source LLM observability, tracing, eval & prompt management | Hobby: Free; Core: $29/month (unlimited users) | Self-hosted, framework-agnostic, no per-seat pricing, 50+ integrations |
| LangSmith | LangChain-native LLM debugging, tracing & deployment | Developer: Free; Plus: $39/seat/month | Zero-setup LangChain/LangGraph tracing, native alerting, LangGraph deployment |
| Cekura | Voice & chat AI agent testing, QA, and production monitoring | Developer: $30/month (750 credits, 10 concurrent calls); Enterprise: custom | End-to-end voice/chat simulation, production call monitoring, and compliance-ready tooling |

Choose Langfuse if: You want full infrastructure control, self-hosting, and usage-based pricing that doesn't punish you for growing your team.

Choose LangSmith if: Your stack runs on LangChain or LangGraph, and you want tracing, alerting, and agent deployment without stitching tools together.

Choose Cekura if: You ship voice or chat AI agents and need simulation testing, regression coverage, and production monitoring in one place, with CI/CD integration included.

Before You Meet the Contenders

Langfuse and LangSmith are observability tools. They trace what your LLM does, track costs and latency, manage prompts, and run evaluations.

Cekura was built for conversational agents. It simulates conversations before they hit production and monitors quality once they do.

Three tools, one question: Is my agent actually working?

Langfuse: Open-Source LLM Observability

Langfuse is an open-source LLM observability platform with 23,100 GitHub stars and an MIT license. It offers 50+ native integrations across libraries, frameworks, and model providers, including LangChain, LlamaIndex, OpenAI, and Anthropic.

What stands out:

- Open-source and self-hostable, with the core feature set for LLM-based apps (tracing, evaluations, prompt management, cost tracking) matching the cloud version

- No per-seat fees: pricing is based on monthly usage units, so a team of 10 doesn't pay extra for headcount as long as usage is similar

- A free tier that includes up to 50,000 units per month

What it's good for: Teams building text-based LLM apps, RAG pipelines, or multi-framework agent workflows who need full infrastructure control, data ownership, and predictable, usage-based costs.

Because seats aren't billed, the model stays practical for growing engineering teams: adding headcount doesn't change your observability bill on its own.

Where it falls short: It was not built for conversational AI testing workloads that require full-session simulation. If you're shipping voice or chat agents at scale, you'll need to build custom tooling on top for end-to-end testing.

There's no native simulation engine, and many advanced alerting workflows still require going outside the UI or integrating with external monitoring tools.

LangSmith: The LangChain-Native Choice

LangSmith is the official observability layer for the LangChain ecosystem.

What stands out:

- Zero-setup tracing for LangChain and LangGraph applications in most workflows, enabled via environment variables alone

- Native alerting with configurable thresholds and built-in Slack, email, and webhook notifications, so you don't need external tooling for basic alerting workloads

- LangGraph Deployment lets you ship agents as production APIs without building a separate agent-serving backend, though depending on the deployment option you choose, you may still host the service on a cloud platform such as AWS, GCP, or Render

- Prompt management with commit-based versioning, a built-in playground, and a community Prompt Hub
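To make "enabled via environment variables alone" concrete, here is a minimal sketch that sets the variables LangSmith's documentation describes for turning on tracing: `LANGSMITH_TRACING`, `LANGSMITH_API_KEY`, and the optional `LANGSMITH_PROJECT` (older LangChain releases read `LANGCHAIN_TRACING_V2` instead). Treat the exact names as an assumption to verify against the current docs; the project name and key below are hypothetical placeholders.

```python
import os


def enable_langsmith_tracing(api_key: str, project: str = "my-agent-dev") -> dict:
    """Set the environment variables LangSmith reads to switch on tracing.

    Variable names follow LangSmith's docs at the time of writing (verify them);
    the default project name is a hypothetical placeholder.
    """
    settings = {
        "LANGSMITH_TRACING": "true",    # turns tracing on
        "LANGSMITH_API_KEY": api_key,   # authenticates trace uploads
        "LANGSMITH_PROJECT": project,   # optional: groups traces by project
    }
    os.environ.update(settings)
    return settings


if __name__ == "__main__":
    enable_langsmith_tracing(api_key="your-api-key-here")
    # LangChain/LangGraph code started after this point in the same process
    # should pick these up; the application code needs no tracing calls.
    print(os.environ["LANGSMITH_TRACING"])
```

In most setups these variables live in the shell or a `.env` file rather than in code; setting them from Python just makes the mechanism visible.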

What it's good for: Teams fully committed to the LangChain ecosystem who want tracing, alerting, evaluation, and agent deployment in one place. The zero-setup tracing alone saves meaningful engineering time, and having deployment built into the same platform means one less tool to maintain.

The tighter your LangChain dependency, the more it earns its place.

Where it falls short: Outside LangChain, instrumentation becomes largely manual, and many of the setup advantages disappear.

The free tier caps at 5,000 traces per month with 14-day retention, which you can exhaust quickly if you run intensive test suites in a single session. And if you need to run it on your own infrastructure, that conversation starts with a sales call.

Cekura: Purpose-Built QA for Conversational AI

Cekura is a testing and monitoring platform for voice and chat AI agents. It simulates real conversations before your agent ships and keeps tracking quality after it does. Backed by Y Combinator, it serves clients across healthcare, financial services, and other regulated industries.

What stands out:

- Automated scenario generation across a wide range of user personas, including interrupters, non-native accents, and adversarial users, so you're testing against the kind of conversations that actually break agents

- You can import real-world production calls, extract edge cases, and turn them into reusable test scenarios, closing the loop between what happens in production and what you test next

- Turn-level metrics track latency, interruptions, tool calls, and instruction-following quality, plus deeper checks for hallucination, safety, and policy compliance

- CI/CD integration with GitHub Actions (and hooks into GitLab, Jenkins, and Azure DevOps) with pass/fail gates on every deploy

- Compliance-ready on Enterprise: SOC 2, HIPAA, GDPR, and self-hosted/VPC deployment options
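Cekura computes its turn-level metrics internally; the sketch below only illustrates what two of them, response latency and interruptions, could mean when derived from call timestamps. The `Turn` fields, the nearest-rank percentile, and the rule that an interruption is the user speaking before the agent finishes are all assumptions for illustration, not Cekura's definitions.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Turn:
    user_end: float                          # seconds: user stopped speaking
    agent_start: float                       # agent began its reply
    agent_end: float                         # agent finished its reply
    next_user_start: Optional[float] = None  # user spoke again, if they did


def nearest_rank_percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest value covering pct% of samples."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def turn_metrics(turns: List[Turn]) -> dict:
    latencies = [t.agent_start - t.user_end for t in turns]
    # Counted as an interruption: the user starts talking before the agent finishes.
    interruptions = sum(
        1 for t in turns
        if t.next_user_start is not None and t.next_user_start < t.agent_end
    )
    return {
        "turns": len(turns),
        "p50_latency_s": nearest_rank_percentile(latencies, 50),
        "p95_latency_s": nearest_rank_percentile(latencies, 95),
        "interruptions": interruptions,
    }


call = [
    Turn(user_end=1.0, agent_start=1.75, agent_end=4.0, next_user_start=4.5),
    Turn(user_end=6.0, agent_start=6.5, agent_end=9.0, next_user_start=8.25),
    Turn(user_end=11.0, agent_start=13.0, agent_end=15.0),
]
print(turn_metrics(call))
# {'turns': 3, 'p50_latency_s': 0.75, 'p95_latency_s': 2.0, 'interruptions': 1}
```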

What it's good for: Engineering teams shipping voice or chat agents who need to know the agent works before users find out it doesn't.

The CI/CD integration is where it pays off most. Every prompt change, model swap, or infra update runs through your test suite automatically before anything reaches production.

Where it falls short: Cekura is purpose-built for conversational AI. Teams building single-turn LLM apps or RAG pipelines without a conversational layer will find limited use for it.

Langfuse vs. LangSmith vs. Cekura: Feature Breakdown

This is where the three tools stop overlapping. Each one handles quality differently, and the gap between them is wider than most comparisons acknowledge.

Conversational AI Testing and Simulation

Langfuse: Covers this through LLM-as-judge evaluations and dataset management. You can build solid test suites, but scenario construction is manual, and there's no native simulation engine for multi-turn conversations. Works well for teams that already know what they want to test.

LangSmith: Handles evaluation runs and playground testing, with good visibility into LangChain agent behavior. Multi-turn simulation requires a custom setup. For teams on LangChain with straightforward testing needs, building that setup manually is usually worth it.

Cekura: Runs pre-production simulations across 50+ predefined personas, including interrupters, non-native accents, adversarial inputs, and jailbreak attempts.

It also validates tool calls, instruction-following, and conversational quality turn-by-turn. Teams can import real production conversations and automatically extract test cases from them.
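Predefined personas matter because of simple multiplication: every persona you add multiplies the scenarios you have to cover. The sketch below builds that grid with `itertools.product`; the persona and scenario names are hypothetical, and the snippet only enumerates test cases. It does not simulate conversations the way Cekura does.

```python
from itertools import product

# Hypothetical labels; a testing platform would supply its own persona library.
PERSONAS = ["interrupter", "non_native_accent", "adversarial", "jailbreak_attempt"]
SCENARIOS = ["book_appointment", "cancel_appointment", "billing_question"]


def build_test_matrix(scenarios, personas):
    """Pair every scenario with every persona so each flow meets each user type."""
    return [
        {"id": f"{scenario}__{persona}", "scenario": scenario, "persona": persona}
        for scenario, persona in product(scenarios, personas)
    ]


matrix = build_test_matrix(SCENARIOS, PERSONAS)
print(len(matrix))       # 12 = 3 scenarios x 4 personas
print(matrix[0]["id"])   # book_appointment__interrupter
```

With 50+ personas and a realistic set of flows, the grid runs into the hundreds of cases, which is the practical argument for generating and running it automatically rather than by hand.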

Winner: Cekura. Langfuse and LangSmith handle evaluation. Cekura handles the full testing cycle before anything reaches production.

Observability and Production Monitoring

Langfuse: Traces everything your LLM does, with cost tracking, latency metrics, and customizable dashboards. You can filter by user, session, or prompt version. Strong for text-based LLM apps. Alerting currently requires the Metrics API or webhook integrations rather than native UI-based rules.
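Since Langfuse alerting runs through the Metrics API or webhooks, teams typically write a small scheduled job along the lines below. This is a sketch under stated assumptions: `fetch_error_rate` is a hypothetical stand-in for whatever query you run against the Metrics API (its real endpoints and response fields are not shown here), and the webhook URL and 5% threshold are placeholders. The snippet builds the webhook request and leaves sending it to the caller.

```python
import json
import urllib.request

ERROR_RATE_THRESHOLD = 0.05  # placeholder: alert above 5% errors
WEBHOOK_URL = "https://hooks.example.com/llm-alerts"  # hypothetical endpoint


def fetch_error_rate() -> float:
    """Hypothetical stand-in for a query against the Langfuse Metrics API."""
    errors, total = 12, 150  # illustrative numbers only
    return errors / total


def build_alert(error_rate: float, threshold: float = ERROR_RATE_THRESHOLD):
    """Return a webhook request if the error rate breaches the threshold, else None."""
    if error_rate <= threshold:
        return None
    body = json.dumps({
        "text": f"LLM error rate {error_rate:.1%} exceeded the {threshold:.0%} threshold"
    }).encode("utf-8")
    return urllib.request.Request(
        WEBHOOK_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    request = build_alert(fetch_error_rate())
    if request is not None:
        print(request.get_method(), request.full_url)
        # urllib.request.urlopen(request)  # uncomment to actually send
```

This is the kind of glue code LangSmith's native alerting saves you from writing and maintaining.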

LangSmith: Real-time tracing with pre-built and custom dashboards covering error rates, token usage, cost, and tool latency.

Native alerting lets you define thresholds and receive Slack, email, or webhook notifications without extra tooling. For general LLM apps, this is a genuine practical advantage over Langfuse's current setup.

Cekura: Monitors every production conversation in real time, tracking intent accuracy, tool-call reliability, latency, sentiment, and user experience at the turn level.

Instant alerts fire on failures, and session replay and trend dashboards give you enough context to act fast. A failing production call can be turned directly into a regression test.
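The step from a failed production call to a regression test can be pictured as a small data transformation: keep the user's side of the conversation, attach the check that failed, and replay it on every future build. Everything here (`Call`, the field names, the `expected` structure) is a hypothetical schema for illustration, not Cekura's import format, and replaying a fixed user script is a simplification, since simulated users usually adapt to the agent's replies.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Call:
    call_id: str
    transcript: List[Tuple[str, str]]  # (speaker, text); speaker is "user" or "agent"
    failed_check: str                  # the evaluation that flagged this call


def to_regression_case(call: Call) -> dict:
    """Keep the user's side of a flagged call so it can be replayed on every build."""
    user_turns = [text for speaker, text in call.transcript if speaker == "user"]
    return {
        "name": f"regression-{call.call_id}",
        "user_script": user_turns,
        "expected": {"check": call.failed_check, "must_pass": True},
    }


flagged = Call(
    call_id="c-1042",
    transcript=[
        ("user", "I need to move my appointment"),
        ("agent", "Sure, which day works?"),
        ("user", "Next Tuesday"),
    ],
    failed_check="tool_call:reschedule_appointment",
)
case = to_regression_case(flagged)
print(case["name"], case["user_script"])
```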

If you're wondering why tracing platforms alone aren't enough for conversational AI monitoring, Cekura's breakdown makes the case in detail.

Winner: Cekura for voice and chat AI. LangSmith for general LLM apps where out-of-the-box alerting matters. Langfuse where self-hosting and cost control are the priority.

Prompt Management

Langfuse: Treats prompts as versioned, named objects with dynamic variables and configuration. Every version is numbered incrementally, and teams can tag versions with labels like production or staging to manage rollouts without touching the codebase.

The playground supports tool calling, structured output validation, and JSON schema checks. You can also reference other prompts from within a prompt directly in the UI.
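Labels are what let a rollout happen without a code change: application code asks for whichever version carries the `production` label, and promoting a new version means moving the label. The class below is a hypothetical in-memory sketch of that idea, not the Langfuse SDK; take the SDK's actual client methods from its documentation.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class PromptRegistry:
    """In-memory illustration of numbered prompt versions plus movable labels."""
    versions: Dict[str, List[str]] = field(default_factory=dict)
    labels: Dict[Tuple[str, str], int] = field(default_factory=dict)

    def create_version(self, name: str, template: str) -> int:
        history = self.versions.setdefault(name, [])
        history.append(template)
        return len(history)  # versions are numbered 1, 2, 3, ...

    def set_label(self, name: str, label: str, version: int) -> None:
        if not 1 <= version <= len(self.versions.get(name, [])):
            raise ValueError(f"{name} has no version {version}")
        self.labels[(name, label)] = version

    def get(self, name: str, label: str = "production") -> str:
        version = self.labels[(name, label)]
        return self.versions[name][version - 1]


registry = PromptRegistry()
registry.create_version("support-greeting", "Hi {{name}}, how can I help?")
v2 = registry.create_version("support-greeting", "Hello {{name}}! What can I do for you today?")
registry.set_label("support-greeting", "production", 1)
registry.set_label("support-greeting", "staging", v2)
print(registry.get("support-greeting"))  # Hi {{name}}, how can I help?
```

Promoting version 2 is then a single `set_label("support-greeting", "production", 2)` call; the code that reads the prompt never changes.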

LangSmith: Uses SHA-based commit IDs for prompt versions, which feels natural for developer-heavy teams. The Prompt Canvas includes an LLM assistant to help with iteration, and a community Prompt Hub lets you share and reuse prompts across projects.

Prompts are tightly coupled to LangChain's ChatPromptTemplate. Step outside that ecosystem, and the friction shows.

Cekura: Prompt management is not what Cekura is for. You can validate prompt changes through regression suites and CI/CD gates, but version control and playground-style iteration happen in your existing toolchain.

Winner: Langfuse for framework-agnostic teams. LangSmith for teams fully on LangChain who want playground-to-evaluation in one workflow.

CI/CD Integration for Automated Quality Gates

Langfuse and LangSmith embed quality checks into observability pipelines. Cekura makes passing a test suite the condition for shipping.

Langfuse: Instrumented through its Python and JavaScript/TypeScript SDKs, with OpenTelemetry support that extends coverage to other languages such as Go. Pre-production quality checks require a custom setup, as there's no native mechanism to block a deployment based on test results.

LangSmith: Evaluation runs trigger programmatically, and the Plus plan includes LangGraph Deployment for packaging agents as deployable APIs. Teams can validate deployments and promote to production with automated evaluations built in.

Cekura: Connects with GitHub Actions, GitLab, Jenkins, and Azure DevOps. Gates based on accuracy, latency, or safety thresholds block deployment automatically, and teams can compare models under identical scenarios while locking versioned baselines.
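Under the hood, a deployment gate is a CI step that exits non-zero when a metric misses its threshold. The sketch below shows that logic in isolation; the result keys, thresholds, and function names are hypothetical and are not Cekura's report format or configuration.

```python
# Hypothetical thresholds; calibrate them against your own baseline runs.
THRESHOLDS = {
    "min_pass_rate": 0.95,
    "max_p95_latency_s": 1.5,
    "max_safety_violations": 0,
}


def evaluate_gate(results: dict, thresholds: dict = THRESHOLDS) -> list:
    """Return human-readable failures; an empty list means the gate passes."""
    failures = []
    if results["pass_rate"] < thresholds["min_pass_rate"]:
        failures.append(
            f"pass rate {results['pass_rate']:.0%} is below {thresholds['min_pass_rate']:.0%}"
        )
    if results["p95_latency_s"] > thresholds["max_p95_latency_s"]:
        failures.append(
            f"p95 latency {results['p95_latency_s']}s exceeds {thresholds['max_p95_latency_s']}s"
        )
    if results["safety_violations"] > thresholds["max_safety_violations"]:
        failures.append(f"{results['safety_violations']} safety violation(s)")
    return failures


def gate_exit_code(results: dict) -> int:
    """Print failures and return the exit code a CI step should use."""
    failures = evaluate_gate(results)
    for failure in failures:
        print(f"GATE FAILED: {failure}")
    return 1 if failures else 0


# In a CI job: sys.exit(gate_exit_code(report)), where `report` holds the
# metrics from the test run (hypothetical schema). A non-zero exit fails the
# job, which is what blocks the deploy step that depends on it.
print(gate_exit_code({"pass_rate": 0.97, "p95_latency_s": 1.8, "safety_violations": 0}))
```

With Langfuse or LangSmith, this is the glue you would write yourself around their evaluation results; Cekura's pitch is that the gate comes built in.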

Winner: Cekura for automated quality gates. LangSmith for tracing, evaluation, and deployment in one platform.

Developer Experience: SDKs and Instrumentation

How much setup stands between a code change and a useful signal is what separates these three.

Langfuse: Fully typed SDKs for Python and JavaScript/TypeScript built on OpenTelemetry. Async requests keep overhead low, making it well-suited for applications where response time matters.
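The general pattern behind low-overhead async tracing is that the application hands trace data to a background worker instead of waiting on the network. The class below is a generic, stdlib-only illustration of that pattern; it is not Langfuse's SDK code, and `send_batch`, the batch size, and the flush interval are hypothetical parameters. A production exporter would also need retries, back-pressure limits, and a flush on process exit.

```python
import queue
import threading

_STOP = object()  # sentinel that tells the worker to drain and exit


class BackgroundExporter:
    """Buffer trace events and ship them from a worker thread, so the
    request path only pays for a queue.put() instead of a network call."""

    def __init__(self, send_batch, batch_size: int = 50, flush_interval: float = 1.0):
        self._queue = queue.Queue()
        self._send_batch = send_batch        # callable receiving a list of events
        self._batch_size = batch_size
        self._flush_interval = flush_interval
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def record(self, event: dict) -> None:
        self._queue.put(event)  # returns immediately; no I/O on the caller's thread

    def _run(self) -> None:
        batch = []
        while True:
            try:
                event = self._queue.get(timeout=self._flush_interval)
            except queue.Empty:
                # Idle for flush_interval: ship whatever has accumulated.
                if batch:
                    self._send_batch(batch)
                    batch = []
                continue
            if event is _STOP:
                break
            batch.append(event)
            if len(batch) >= self._batch_size:
                self._send_batch(batch)
                batch = []
        if batch:
            self._send_batch(batch)  # final drain on shutdown

    def flush_and_stop(self) -> None:
        self._queue.put(_STOP)
        self._worker.join()


sent = []
# Long interval so only size-based batching applies in this demo.
exporter = BackgroundExporter(send_batch=sent.append, batch_size=2, flush_interval=10.0)
for i in range(5):
    exporter.record({"span": i})
exporter.flush_and_stop()
print([len(batch) for batch in sent])  # [2, 2, 1]
```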

LangSmith: Almost zero setup for LangChain and LangGraph teams. SHA-based versioning fits Git-oriented workflows, though outside LangChain, the onboarding advantage largely disappears.

Cekura: Centers on conversation simulation and quality dashboards. Prompt versioning and iteration happen in your own environment, while Cekura validates the results.

Winner: Cekura for pre-production gating. Langfuse for framework-agnostic instrumentation. LangSmith for fast onboarding within LangChain.

Pricing and Scalability

Langfuse: Free tier includes 50,000 units/month. Core is $29/month, Pro is $199/month, and Enterprise is $2,499/month. No per-seat fees at any tier.

Self-hosting is free under the MIT license, but running a production-grade deployment typically involves infrastructure and DevOps costs on the order of hundreds to a few thousand dollars per month, depending on scale and HA requirements.

LangSmith: The free Developer tier includes 5,000 traces/month with 14-day retention, tight enough that a few hundred test runs in a single session can exhaust it when each run produces several traces. The Plus plan is $39/seat/month and scales linearly with headcount.

A 10-person team is $390/month, with 10,000 traces included and overages at $0.50 per 1K additional traces. Self-hosting requires an Enterprise contract.

Cekura: The Developer plan is $30/month per user and includes 750 credits, 10 concurrent calls, 1 project, unlimited agents, and a 7-day free trial with 300 credits and no credit card required. If you need higher call volumes, compliance documentation, and dedicated support, that's the Enterprise tier.
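To see how per-seat and flat pricing diverge as a team grows, here is a back-of-the-envelope calculator that uses only the list prices quoted in this section. It deliberately leaves out what the article doesn't specify (Langfuse usage beyond the Core allowance, Cekura credits beyond the included 750, taxes, annual discounts), treats LangSmith's 10,000 included traces as a team-wide allowance, and assumes overage is charged pro rata per 1K traces. Cekura solves a different problem, so its line only shows how its bill scales with headcount. Check current pricing pages before relying on the numbers.

```python
def langsmith_plus_monthly(seats: int, traces: int,
                           seat_price: float = 39.0,
                           included_traces: int = 10_000,
                           overage_per_1k: float = 0.50) -> float:
    """Per-seat fee plus trace overage (assumed to be charged pro rata per 1K)."""
    extra_traces = max(0, traces - included_traces)
    return seats * seat_price + extra_traces / 1000 * overage_per_1k


def langfuse_core_monthly(seats: int) -> float:
    """Flat Core fee: headcount doesn't change it. Usage overage is not modeled."""
    return 29.0


def cekura_developer_monthly(users: int, price_per_user: float = 30.0) -> float:
    """Developer plan per user; credits beyond the included allowance are not modeled."""
    return users * price_per_user


for team in (1, 5, 10, 25):
    print(
        f"{team:>2} people | LangSmith Plus ${langsmith_plus_monthly(team, traces=25_000):>7.2f}"
        f" | Langfuse Core ${langfuse_core_monthly(team):>6.2f}"
        f" | Cekura Developer ${cekura_developer_monthly(team):>7.2f}"
    )
```

For the 10-person team above running 25,000 traces a month, LangSmith Plus comes to $390 in seats plus $7.50 in overage, or $397.50, while the Langfuse Core fee stays at $29 regardless of team size.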

Winner: Langfuse on cost efficiency and self-hosting flexibility. Cekura on ROI for teams where manual QA was previously eating weeks of engineering time.

What Real Users Say

The following reflects common themes from real user reviews on G2, Reddit, and Product Hunt.

Langfuse

Pro: "Highly recommended for anyone using complex chains or with user-facing chat applications, where latency becomes crucial." — David, Product Hunt

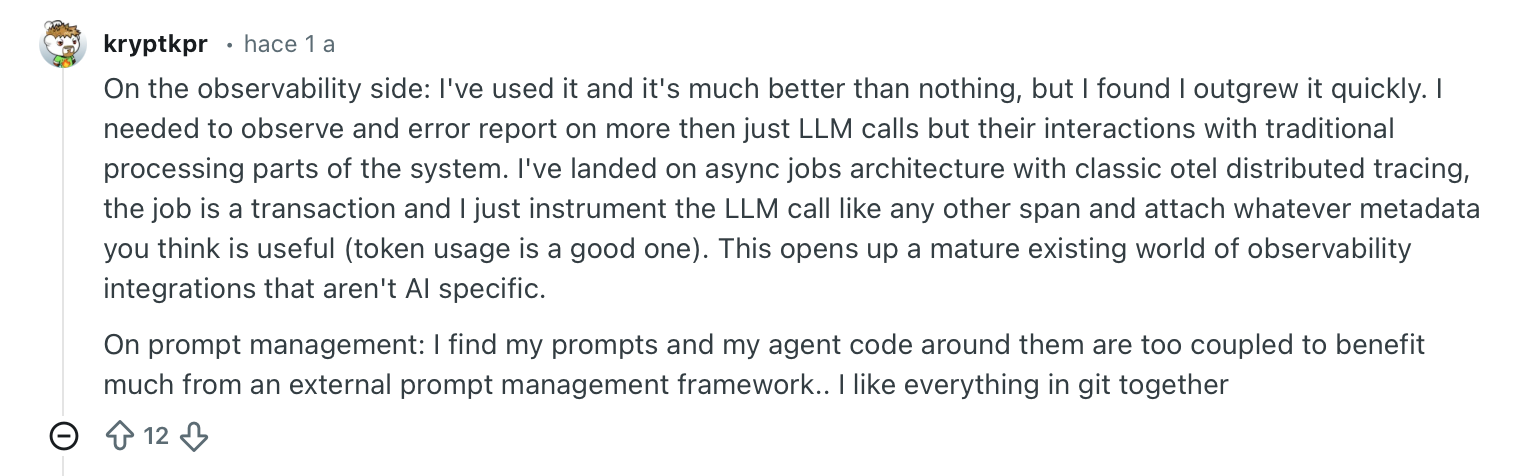

Con: "On the observability side: I've used it and it's much better than nothing, but I found I outgrew it quickly." — Verified User, Reddit

LangSmith

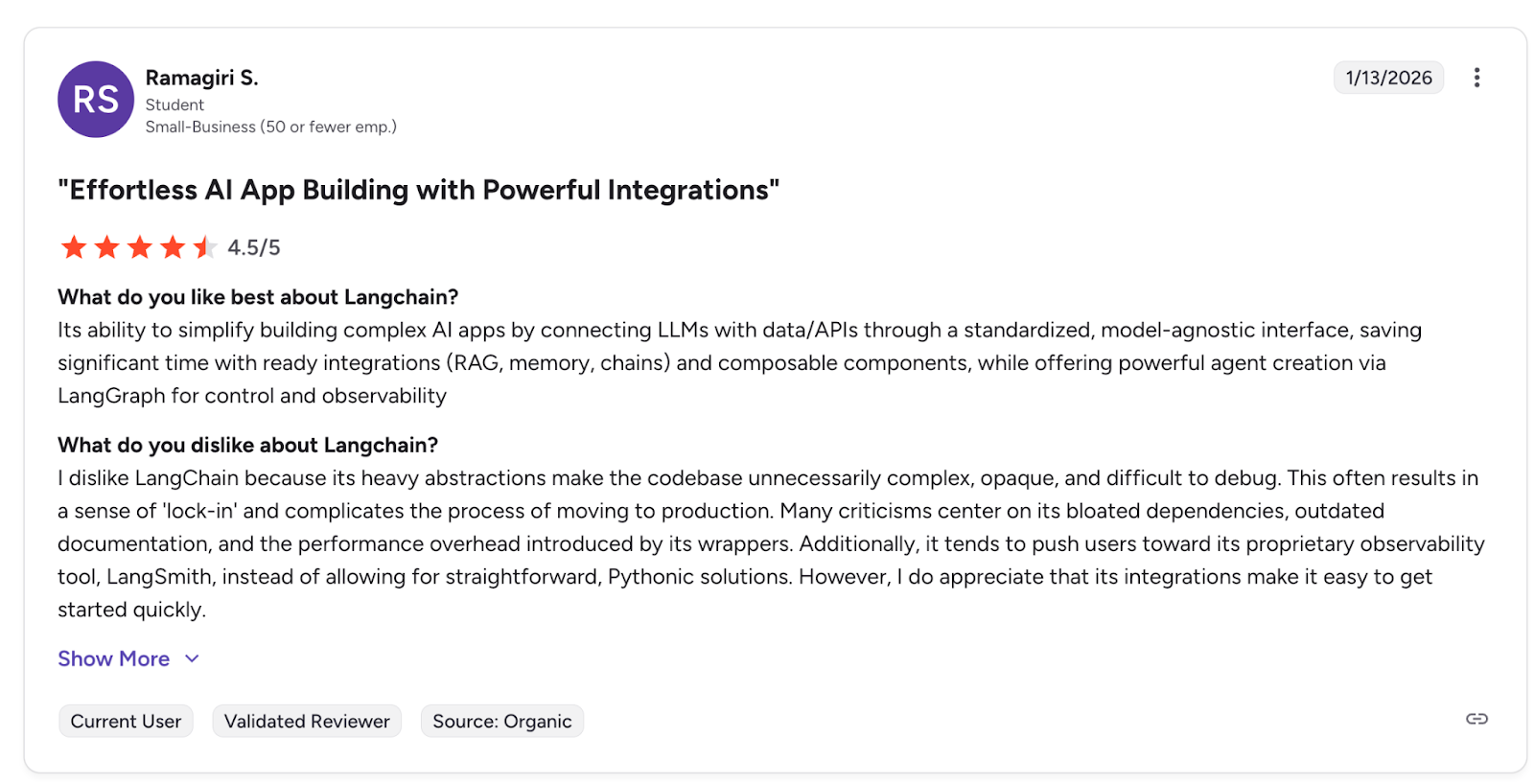

Pro: "Its ability to simplify building complex AI apps by connecting LLMs with data/APIs through a standardized, model-agnostic interface, saving significant time with ready integrations (RAG, memory, chains) and composable components." — Ramagiri S., G2

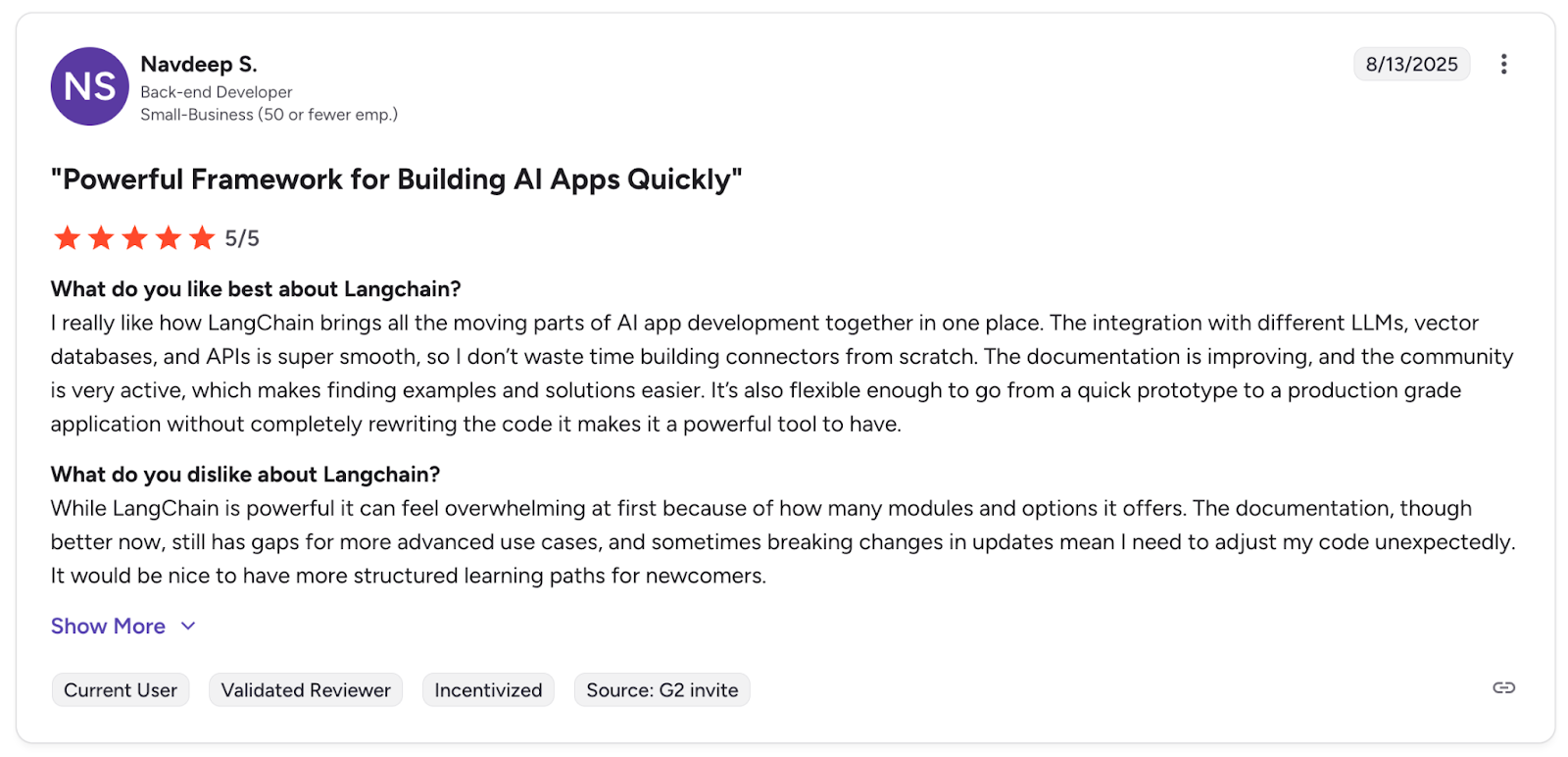

Con: "While LangChain is powerful it can feel overwhelming at first because of how many modules and options it offers." — Navdeep S., G2

Cekura

Pro: "Best Voice AI Testing platform on the market with an impeccable team! Lets gooo!" — Aditya Lahiri, Product Hunt

Pro: "Cekura sounds like a game-changer for Conversational AI development. I love the focus on reliability and speed—it's such a pain point in the industry." — Vladimir Kibanov, Product Hunt

Which Tool Should You Choose?

Langfuse and LangSmith answer the same question: What is my LLM doing in production? They do it well. But if your LLM is having conversations with real users, that question is not enough.

Choose Langfuse if you:

- Need full self-hosting control and want to avoid vendor lock-in

- Have a growing team where per-seat pricing would add up fast

- Are building text-based LLM apps, RAG pipelines, or agent workflows across multiple frameworks

- Need to keep your data fully on your own infrastructure for compliance reasons

Choose LangSmith if you:

- Run your stack on LangChain or LangGraph and want tracing that works out of the box

- Need native alerting without building custom webhook infrastructure

- Want to deploy LangGraph agents as production APIs without adding another tool

- Have a small team where per-seat costs remain manageable

Choose Cekura if you:

- Are deploying voice or chat AI agents into production

- Need automated simulation testing across realistic user personas before launch

- Want CI/CD quality gates that block bad models or prompt changes from shipping

- Lead an engineering team where manual QA is creating deployment bottlenecks

- Work in a regulated industry (healthcare, fintech, legal) requiring documented audit trails

My Final Verdict

Most teams shipping voice or chat agents start with manual QA: spot-checking calls, writing test scripts by hand, and waiting days to find out if a prompt change broke something. Cekura replaces that entire workflow. That's the win.

Langfuse's long-term bet feels solid: it has roughly 23,100 GitHub stars and was recently acquired by ClickHouse. LangSmith has grown into something genuinely complete; just factor in per-seat pricing and Enterprise-only self-hosting before you commit.

If conversational AI is where your team is headed, Cekura is worth a closer look.

Ready to Try Cekura?

If your team ships voice or chat AI agents, book a demo with Cekura and see how automated simulation testing fits into your current workflow.

Frequently Asked Questions

What Is the Main Difference Between Langfuse, LangSmith, and Cekura?

Langfuse and LangSmith handle tracing, cost tracking, prompt management, and evaluation for any LLM app. Cekura is purpose-built for conversational AI and adds pre-production simulation testing and turn-level production monitoring.

Is Langfuse Really Free to Use?

Yes. The free Hobby cloud tier includes 50,000 units per month at no cost, and you can also self-host Langfuse under the MIT license. Paid tiers start at $29/month with no per-seat fees.

Does LangSmith Work Without LangChain?

Yes, but outside LangChain and LangGraph, instrumentation becomes manual, and you lose the automatic tracing that makes it worth using.

Can Langfuse Be Self-Hosted for Free?

Yes, under the MIT license, with the same core features as the cloud version. Running it in production typically costs hundreds to a few thousand dollars per month in infrastructure, depending on scale and high-availability requirements. LangSmith's self-hosted option requires an Enterprise contract.

How Does Cekura Handle Voice AI Testing?

Cekura generates synthetic user personas, including interrupters, non-native accents, and adversarial users. It also simulates full multi-turn voice conversations against your agent. It evaluates instruction-following, tool calls, latency, and interruption handling at the turn level.

Which Tool Is Best for Regulated Industries Like Healthcare or Finance?

Cekura supports SOC 2, HIPAA, and ISO compliance and already serves clients in healthcare and financial services. Langfuse's Enterprise tier covers the same certifications with full self-hosting. LangSmith covers SOC 2 Type II and GDPR, with HIPAA on Enterprise.