ElevenLabs is one of the most advanced conversational AI platforms for developer teams building voice agents. This ElevenLabs review examines its real capabilities, costs, and how it compares to the alternatives.

Quick Verdict

ElevenLabs combines voice AI and real-time agent infrastructure in one platform, with a sub-100ms latency target on its Flash v2.5 model.

It works well for teams that need expressive AI voices and flexible developer integrations. Pricing unpredictability and limited production monitoring are the main drawbacks.

What Is ElevenLabs Conversational AI?

ElevenLabs Conversational AI is a developer platform built around real-time voice agents. It helps product teams deploy AI-powered phone and web agents with features like turn-taking models, LLM integrations, telephony support, and RAG (retrieval-augmented generation, which pulls answers from your own knowledge base in real time).

Key Features

The main features cover the full stack for building and deploying voice agents, from the voice layer to telephony, integrations, and compliance:

- Real-time voice synthesis: Generates human-sounding speech in under 100ms, fast enough for live phone conversations without awkward gaps between turns.

- Turn-taking model: Detects when a user is actually done speaking by reading cues like "um" and "ah," so the agent waits or responds at the right moment instead of cutting the caller off.

- Bring your own LLM: Works with GPT-4, Claude, Gemini, or a custom model, so you wire in your preferred reasoning layer while ElevenLabs handles the voice output.

- RAG: During a call, agents query your own docs, FAQs, or internal knowledge base, so answers stay grounded in your actual content rather than generic training data.

- Multimodal support: A single-agent configuration handles voice, text, or both, so teams don't have to maintain separate bots for chat and phone channels.

- Telephony & omnichannel deployment: Supports inbound and outbound calling via Twilio, Vonage, or any SIP system, and also deploys on web and mobile through JS, Python, Swift, and React SDKs.

- Integrations: Connects to Salesforce, Zendesk, Stripe, HubSpot, and more, so agents can check balances, book appointments, and update records without leaving the conversation.

- Enterprise security: Covers SOC 2 Type II, GDPR, and EU Data Residency, with HIPAA-eligible deployments and Zero Retention Mode available on the Enterprise plan, meeting the compliance requirements for healthcare, finance, and enterprise deployments.
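To make the turn-taking idea concrete, here is a toy sketch in Python. It is not how ElevenLabs implements turn-taking; the `should_respond` function, the filler list, and both silence thresholds are assumptions made up for illustration. It only shows the basic intuition: a trailing "um" should buy the caller more time before the agent replies.

```python
# Toy end-of-turn heuristic. NOT ElevenLabs' turn-taking model:
# the filler list and the silence thresholds are illustrative assumptions.
FILLERS = {"um", "uh", "ah", "er", "hmm"}


def should_respond(transcript: str, silence_ms: int,
                   base_wait_ms: int = 500, filler_wait_ms: int = 1500) -> bool:
    """Return True when the agent should start speaking."""
    # Strip basic punctuation so "um..." and "um," both count as fillers.
    words = transcript.lower().replace(",", " ").replace(".", " ").split()
    if not words:
        return False  # nothing heard yet, keep listening
    # A trailing filler suggests the caller is still thinking, so wait longer.
    required_ms = filler_wait_ms if words[-1] in FILLERS else base_wait_ms
    return silence_ms >= required_ms


print(should_respond("I want to book, um", silence_ms=700))       # False: trailing filler, keep waiting
print(should_respond("I want to book a table.", silence_ms=700))  # True: complete thought, enough silence
```

A production turn-taking model would work on audio and timing signals rather than a word list, but the trade-off is the same: wait too little and the agent interrupts, wait too long and the call feels laggy.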

ElevenLabs Conversational AI Pricing Plans Overview

| Plan | Price | Credits/month | Approx. agent minutes* |
|---|---|---|---|
| Free | $0/month | 10,000 | ~15 min |
| Starter | $5/month | 30,000 | ~45 min |
| Creator | $22/month (currently $11 for the first month) | 100,000 | ~150 min |
| Pro | $99/month | 500,000 | ~750 min |
| Scale | $330/month | 2,000,000 | ~3,000 min |
| Business | $1,320/month | 11,000,000 | ~16,500 min |
| Enterprise | Custom | Custom | Custom |

*Agent minutes estimated at ~15 min per 10,000 credits (standard quality). LLM costs are billed separately and not included.
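If you want to sanity-check the table or plug in your own call volume, the arithmetic is easy to script. The sketch below uses only the plan prices, credit amounts, and the ~15 minutes per 10,000 credits estimate from the table above; the function names are my own, and the result is the effective subscription cost per agent minute before LLM costs.

```python
# Plan figures copied from the pricing table; Enterprise is omitted (custom pricing).
PLANS = {
    "Starter": (5, 30_000),
    "Creator": (22, 100_000),
    "Pro": (99, 500_000),
    "Scale": (330, 2_000_000),
    "Business": (1_320, 11_000_000),
}
MINUTES_PER_10K_CREDITS = 15  # this article's estimate for standard quality


def agent_minutes(credits: int) -> float:
    """Approximate agent minutes that a monthly credit allowance covers."""
    return credits / 10_000 * MINUTES_PER_10K_CREDITS


def cost_per_minute(price_usd: float, credits: int) -> float:
    """Effective subscription cost per agent minute, excluding LLM costs."""
    return price_usd / agent_minutes(credits)


for name, (price, credits) in PLANS.items():
    print(f"{name:<9} {agent_minutes(credits):>8,.0f} min  "
          f"${cost_per_minute(price, credits):.3f}/min")
```

The per-minute figures are only as good as the 15-minute estimate, so treat them as a planning aid rather than a quote.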

ElevenLabs Reviews: What Real Users Are Saying

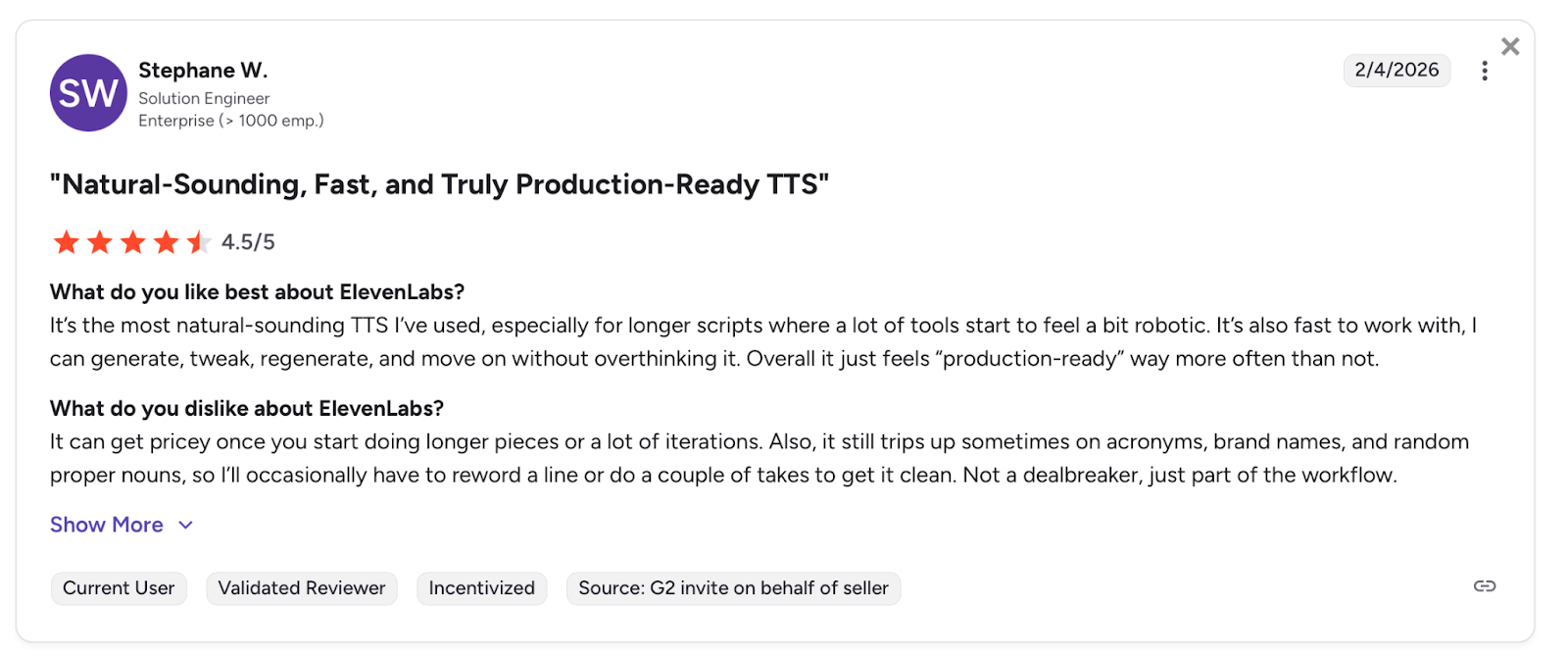

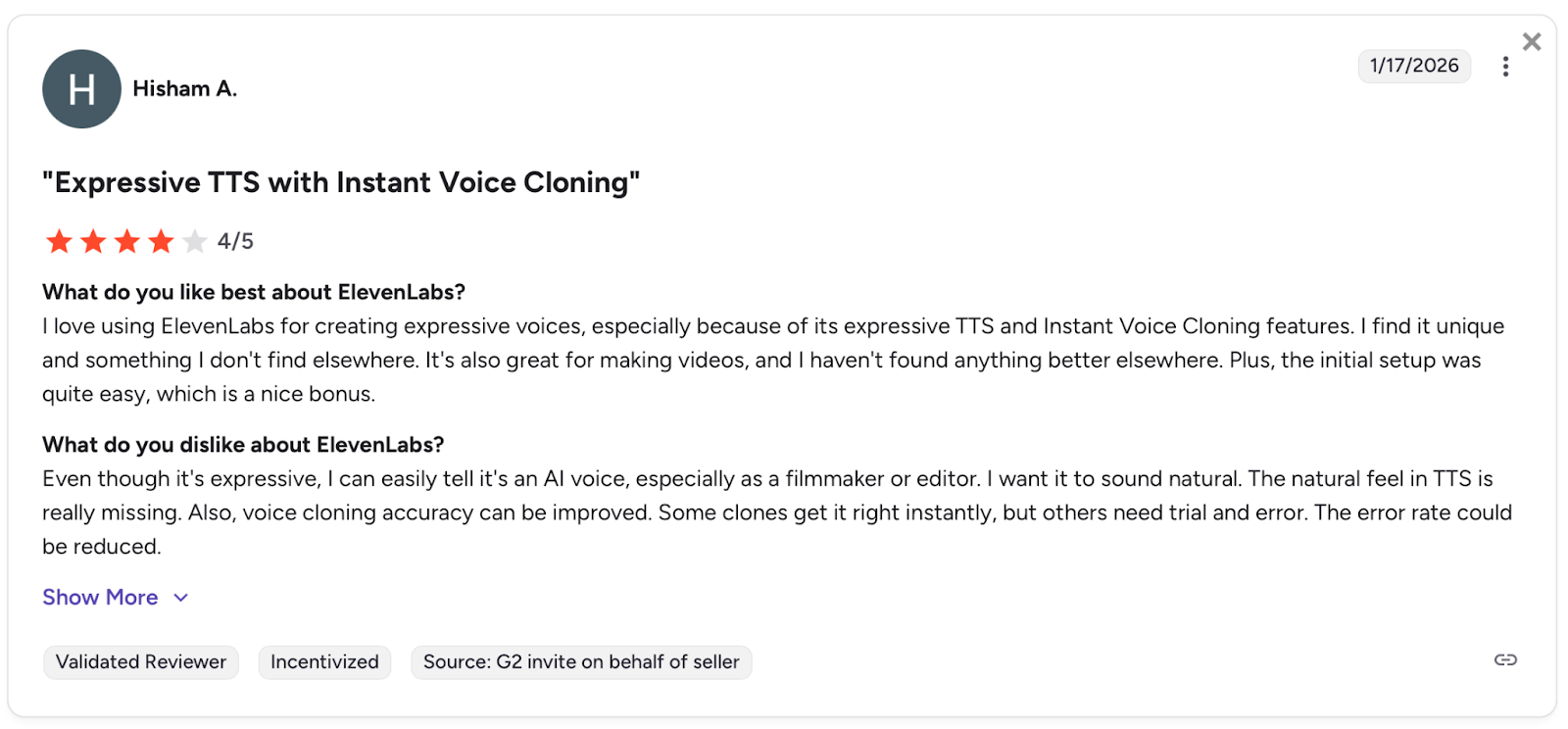

I looked at various ElevenLabs reviews on G2 from real users to understand what developer teams and enterprises experience day-to-day.

Pros

- Production-ready voice quality: Users describe the output as consistently natural, with prosody that holds up across longer scripts. One reviewer notes it feels production-ready for real-world applications, not just internal demos.

- API setup in minutes: One developer got the full API working in fifteen minutes, describing the setup as straightforward from start to finish.

- Low latency for real-time agents: A developer building NLP projects in Python notes that latency is quite low, directly benefiting real-time chatbot demonstrations.

- API is easy to integrate into real-world applications: One reviewer highlights that the API integrates well, with voice customization and stability settings that work reliably outside demo environments.

Cons

- Voice cloning needs trial and error: One reviewer notes that some clones get it right instantly, but others require multiple attempts before the output is usable in production.

- v3 model artifacts break API pipelines: Eleven v3 sometimes produces random artifacts at the start or end of audio, causing issues in API implementations. v2 is more stable but less realistic.

- Initial setup can be tough for new teams: A reviewer notes that understanding the platform takes time at first, and the interface could be clearer for teams onboarding without prior TTS experience.

- Workflow gets fragmented on longer projects: One reviewer notes the process feels slightly fragmented when iterating on updated text versions, with regeneration times adding up and version control lacking clarity.

Overall, users appreciate the voice quality and how quickly the API gets you to a working agent.

The frustration tends to come later: v3 artifacts that break pipelines mid-production, workflows that fragment on longer projects, and a learning curve that catches teams off guard after a smooth initial setup.

My Personal Take on ElevenLabs Conversational AI

Here's what stood out after working with the platform directly.

The Voice Quality Is the Real Deal

Your callers stay on the line because the voice doesn't give itself away. Most AI agents still have that flat, slightly off cadence that users pick up on immediately. ElevenLabs doesn't, and that changes how people engage with your agent from the first call.

Post-Launch Is Where It Gets Honest

Getting to a working prototype is fast. The turn-taking model handles conversation flow well, wiring in your own LLM rarely becomes a bottleneck, and the API setup is straightforward.

But shipping a prototype and running a production deployment are two different problems. Once your agent is live, there's no native way to know if it's following instructions correctly, handling edge cases, or holding up across thousands of calls.

Problems stay hidden until a user hits one, and without production monitoring, diagnosing what went wrong means manually reviewing recordings.

Teams that add Cekura on top of ElevenLabs close that gap: production monitoring surfaces failures automatically, and conversation replay shows you exactly what broke, without manual call review.
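To show what "knowing if the agent follows instructions" means in practice, here is a minimal sketch of an automated check you could run over call records instead of listening to recordings. It is an illustration only, not Cekura's or ElevenLabs' implementation: the `Call` structure, the `review_call` function, the required phrase, and the 1,000 ms threshold are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Call:
    """Hypothetical call record: what the agent said and how fast it replied."""
    call_id: str
    agent_turns: list[str]
    response_latencies_ms: list[int] = field(default_factory=list)


def review_call(call: Call, required_phrases: list[str],
                max_latency_ms: int = 1000) -> list[str]:
    """Return human-readable issues found in one call (empty list means clean)."""
    issues = []
    spoken = " ".join(call.agent_turns).lower()
    # Instruction check: every required phrase must appear somewhere in the call.
    for phrase in required_phrases:
        if phrase.lower() not in spoken:
            issues.append(f"missing required phrase: {phrase!r}")
    # Latency check: flag any agent response slower than the threshold.
    slow = [ms for ms in call.response_latencies_ms if ms > max_latency_ms]
    if slow:
        issues.append(f"{len(slow)} slow response(s), worst {max(slow)} ms")
    return issues


call = Call("c-001", ["Hi, thanks for calling.", "Your balance is $40."], [420, 1850])
for issue in review_call(call, ["this call may be recorded"]):
    print(call.call_id, issue)
```

Simple string and threshold checks like these catch only the obvious failures, which is why dedicated tooling layers on replay and LLM-based evaluation.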

What Users Say Lines Up

Developer reviews show the same pattern: quick setup, a clean API, and voice quality that holds up.

The friction starts after the prototype ships, when you realize the platform was built to help you launch, not to help you maintain what you launched.

For healthcare or finance teams, that gap needs to be addressed before anyone signs off on going live.

Is ElevenLabs Conversational AI Right for You?

ElevenLabs fits some teams well and leaves others wanting more. Here's how to tell which side you're on.

You will love it if:

- You're building a customer-facing voice agent where callers need to forget they're talking to an AI.

- You already have an LLM and need a well-documented voice layer to wire on top.

- You're a startup applying for the Grant Program to build and test without upfront costs.

- You're in healthcare or finance and need HIPAA-eligible deployments with signed BAAs (Business Associate Agreements).

You should avoid it if:

- You need built-in tools to monitor agent behavior in production, not just at launch. This gap is directly addressable: Cekura's ElevenLabs integration adds production monitoring, conversation replay, and automated regression testing on top of ElevenLabs without replacing it.

- You're running high-volume deployments outside the US, and latency under concurrent load is a concern.

- You need predictable monthly costs without credit overage surprises.

- You want a voice agent that works without API configuration or LLM setup.

The Best ElevenLabs Alternative: Cekura

Cekura doesn't replace ElevenLabs. It makes the agent you built on ElevenLabs reliable once it's live. It's the automated QA layer that runs on top: simulating thousands of conversations before launch, monitoring every call in production, and replaying failures when something breaks.

- Testing at scale: Thousands of simulated conversations run before you go live, catching the edge cases that only surface when real people start talking to your agent.

- Interruption detection: When the agent talks over a user or cuts off mid-sentence, it's usually a timing problem nobody flagged. Cekura catches those patterns before they become a habit.

- Latency tracking: Measures where slowdowns originate in the pipeline so you know exactly what to fix after each deployment.

- Conversation replay: When something breaks in production, replay that exact exchange against your updated agent to confirm the fix actually worked.

- Custom evaluation: Score every conversation on accuracy, missed intents, and incorrect responses using your own criteria, tuning your LLM judges in Cekura's Labs feature until they match your ground truth.

- CI/CD pipeline integration: Every time you update a prompt, swap a model, or change a voice provider, Cekura runs your full test suite automatically before anything goes live.

- A/B testing across platforms and models: Compare multiple versions of your agent against the same scenarios, whether you are testing different platforms or model providers (LLM, STT, TTS), and review the results in one place.

- SOC 2 Type II certified: No raw transcript storage, verified security standards throughout.

Native integrations work out of the box for Retell, Vapi, ElevenLabs, LiveKit, and Pipecat, among others. You don't rebuild anything. You add a testing and monitoring layer on top of what you already have.

Ready to see how it works? Schedule a demo with Cekura.

Final Verdict

If you need human-sounding voice agents that your callers won't second-guess, ElevenLabs is the strongest option available. But if you want to know what those agents are actually doing once they're live and catch failures before your customers do, Cekura is the layer that completes the stack.

Frequently Asked Questions

Does ElevenLabs Conversational AI Support Phone Calls?

Yes, it supports inbound and outbound calls through Twilio, Vonage, Genesys, Telnyx, Plivo, and any SIP-compatible PBX. Batch calling is also available for automated outbound campaigns.

Can I Use My Own LLM With ElevenLabs Conversational AI?

Yes, ElevenLabs supports GPT-4, Claude, Gemini, and custom LLM integrations via server connection. You bring the reasoning layer, and ElevenLabs handles voice output, speech recognition, and turn-taking.

How Much Does ElevenLabs Conversational AI Cost per Minute?

Calls start at $0.10 per minute on the Starter, Creator, and Pro plans. The Business annual plan brings that down to $0.08 per minute. These prices don't include LLM costs.

Is ElevenLabs Conversational AI HIPAA Compliant?

Yes, but only at the Enterprise tier. HIPAA-eligible deployments require a signed BAA, which ElevenLabs only provides to Enterprise customers with Zero Retention Mode enabled.

What Is the Latency of ElevenLabs Conversational AI?

ElevenLabs targets sub-100ms latency on the Flash v2.5 model under standard conditions. Real-world performance varies by region and concurrent load, so teams should test before committing to a plan.
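When you run that test, look at percentiles rather than the average, since a few slow responses are what callers actually notice. A minimal sketch, assuming you have already collected latency samples in milliseconds from your own region and load (how you measure them is up to you; the `latency_summary` name and the sample values are made up for illustration):

```python
import statistics


def latency_summary(samples_ms: list[float]) -> dict[str, float]:
    """Summarize measured latencies; needs at least two samples."""
    # quantiles(n=100) returns 99 cut points: index 49 is p50, index 94 is p95.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "max": max(samples_ms)}


# Made-up numbers for demonstration, not measurements of ElevenLabs.
samples = [82, 95, 110, 88, 240, 91, 99, 105, 87, 93]
print(latency_summary(samples))
```

Run it separately for each region and concurrency level you care about, and compare p95 rather than p50 against your latency budget.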

What Is the Difference Between ElevenLabs Conversational AI and Vapi?

The main difference is the focus. ElevenLabs owns the full voice stack and optimizes for voice quality. Vapi is an orchestration layer that lets you mix providers, including ElevenLabs. Choose ElevenLabs for voice realism. Choose Vapi for provider flexibility.